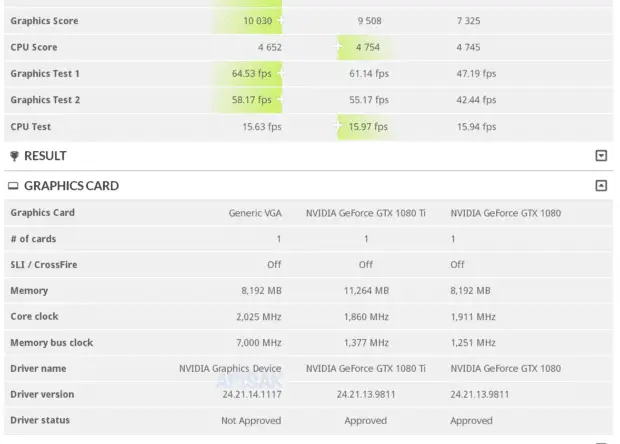

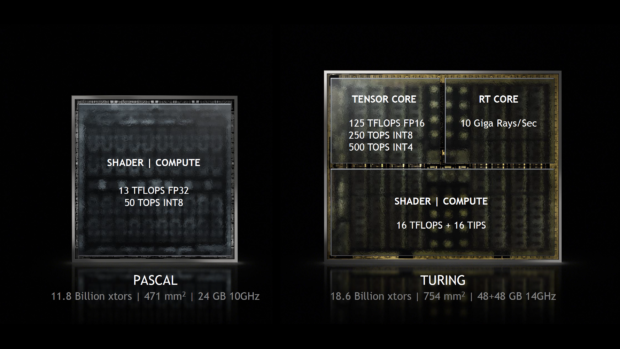

These are results from the 3DMark Time Spy benchmark, and they should be taken with a pinch of salt. The run probably did not use the latest graphics drivers. It also involves no AI computations (such as those used for DLSS rendering), simply because the test has not yet been adapted to the new generation. That will change soon. So how does the GeForce RTX 2080 perform without using roughly a third of the capabilities hidden in its TU104 chip? I mean the Tensor and RT cores, which were revealed in the detailed presentation of the Turing architecture.

The numbers above don’t say much on their own, so let me explain. The RTX 2080 scored 37% higher than the GTX 1080 and 6% higher than the GTX 1080 Ti. Only a little better? Perhaps, but keep in mind that no AI calculations are involved here, only the raw performance of the CUDA cores. That result looks really good, and it should only improve with driver optimizations. And it was achieved at an unexpectedly high clock speed of 2025 MHz! Remember that in the RTX 2080’s case, the test did not use… a third of the chip’s area. Those units come into play only with DLSS and Nvidia RTX rendering techniques.

Source: Wccftech

Pictures: TumApisak, Nvidia