Taking pictures or recording videos is very enjoyable, but one thing about it is uniquely annoying: when we zoom in on an image, at some point it becomes blurry and the details disappear. It turns out that this may change soon.

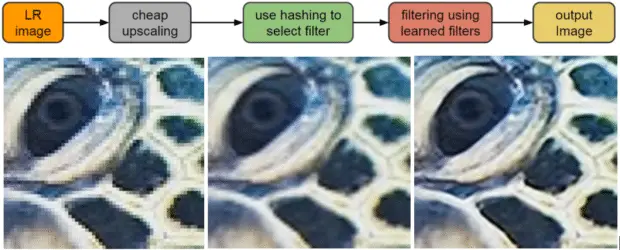

Images are made up of tiny dots called pixels. The more of them there are (that is, the higher the resolution), the more detail you can see. Zoom in far enough, though, and you always reach a point where only a checkerboard of pixels is visible on the screen. Google is trying to change this with machine learning. The company has reportedly developed a technique that reconstructs detail in low-resolution images, called RAISR (Rapid and Accurate Image Super-Resolution). It is based on training a program on the so-called edges of an image (the parts where color and brightness change sharply, i.e. where the gradient is steep) and having it try to recreate them. A low-resolution image is thus analyzed by a machine that tries to find a way to upscale it to a higher resolution.
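To make the two ideas above more concrete, here is a minimal Python sketch. It is not RAISR itself and not Google's code. It only illustrates naive upscaling (which produces the pixel checkerboard) and a simple way to locate edges by measuring how sharply brightness changes. The function names, the tiny test image and the threshold of 128 are all assumptions chosen for illustration.

```python
def upscale_nearest(image, factor):
    """Naive upscaling: repeat every pixel `factor` times in both directions."""
    out = []
    for row in image:
        wide = [pixel for pixel in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


def gradient_magnitude(image):
    """Estimate the brightness change at each pixel using forward differences."""
    height, width = len(image), len(image[0])
    magnitude = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Difference to the right and lower neighbours (clamped at the border).
            gx = image[y][min(x + 1, width - 1)] - image[y][x]
            gy = image[min(y + 1, height - 1)][x] - image[y][x]
            magnitude[y][x] = (gx * gx + gy * gy) ** 0.5
    return magnitude


def edge_mask(image, threshold):
    """Mark pixels whose gradient magnitude reaches `threshold` (an assumed value)."""
    return [[m >= threshold for m in row] for row in gradient_magnitude(image)]


# Hypothetical 2x3 grayscale image: dark on the left, bright on the right.
tiny = [
    [0, 0, 255],
    [0, 0, 255],
]
big = upscale_nearest(tiny, 2)   # 4x6 image made of blocky 2x2 pixels
edges = edge_mask(big, 128)      # True only where dark meets bright
```

The upscaled image contains no new detail, only larger blocks. A learning-based method would instead use what it has learned about edges like the one marked in `edges` to produce a sharper result than plain pixel repetition.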

If Google succeeds in this project, the technique will find many applications. What comes to my mind is high-quality upscaling of movies, games or images to higher resolutions, e.g. 4K or, in the future, 8K. Another is re-analyzing old or damaged photos and recordings, from which it may become possible to extract much more information. What applications would you see for this method?

Source: https://www.techpowerup.com/, https://research.googleblog.com/