Most of you have probably shopped for a new SSD at some point and, comparing the individual capacities, wondered why performance grows along with the available space. Is it an evil plan by the manufacturer to boost profits by pushing us toward a larger drive?

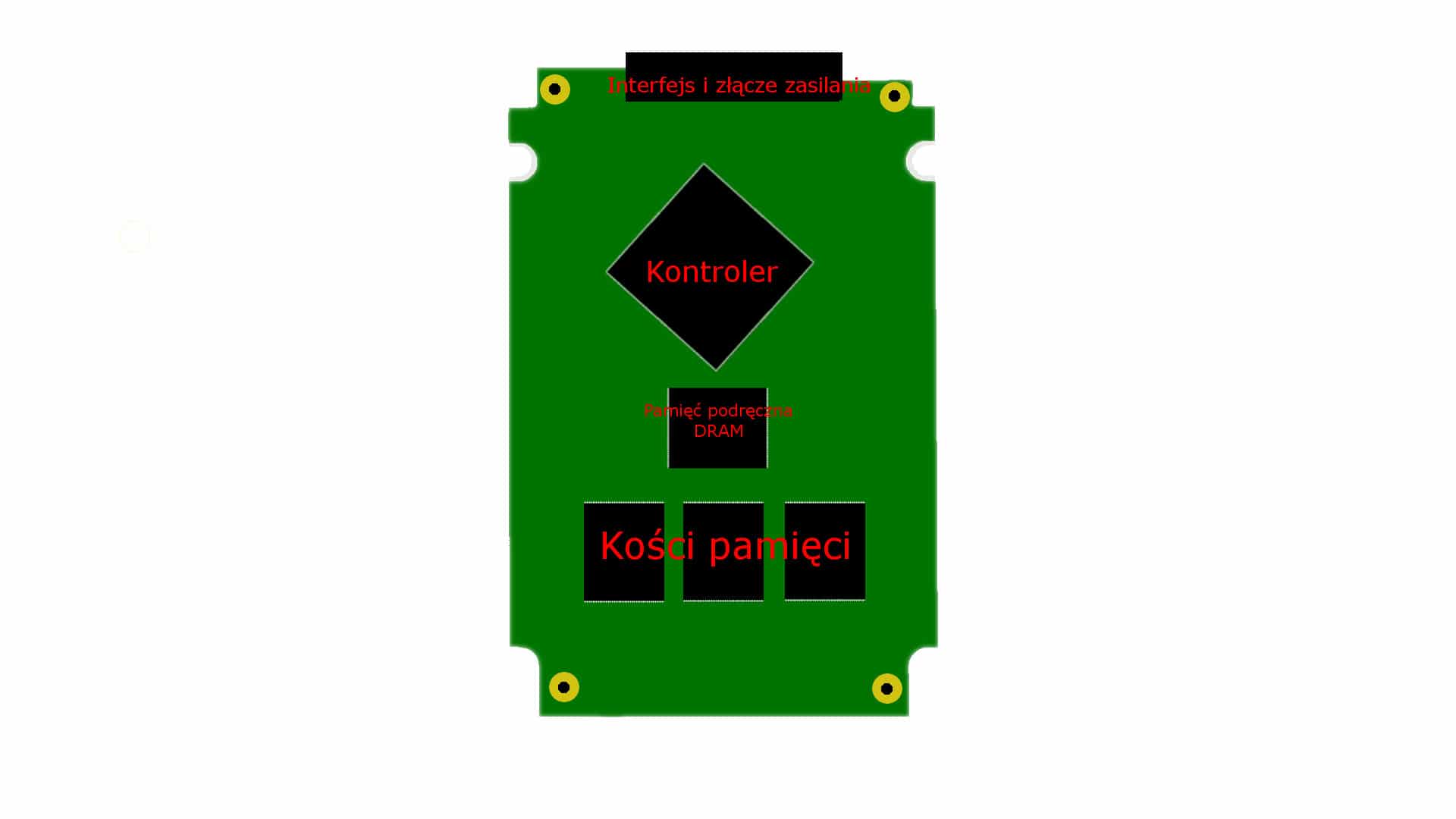

To understand where this phenomenon comes from, we need to look at how a solid-state drive (SSD) is built. Inside its housing are, among other things, the memory chips and the controller that manages them and handles writing and reading data. In most cases, these two elements determine the capacity and speed of any SSD. We should not forget about other components, though, such as the cache memory that works directly with the controller.

For a fair comparison, it is also worth saying a few words about traditional hard drives (HDDs). There, the role of the memory chips is played by constantly spinning platters, which are read and written by a physically moving head, a rough equivalent of the SSD controller. Even though the number of platters can increase (which only adds capacity), the set of mechanical heads can work at full efficiency on only one task at a time. SSDs are a completely different story. Their controller can write to and read from several memory chips simultaneously, and those same chips determine the available space.

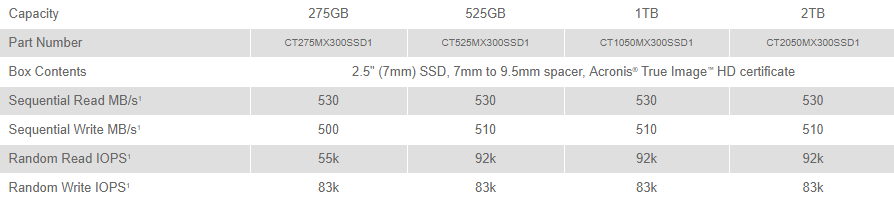

In other words, the more memory chips the manufacturer puts on the circuit board, the more performance the drive will offer in specific tasks, as long as the controller is able to handle all of them. Most manufacturers take advantage of exactly this. Imagine a 128 GB drive made up of four memory chips with a capacity of 32 GB each. Reading (and writing) data in parallel across four chips will be much slower than doing it across, say, eight or sixteen. This is why SSDs of different capacities in the same series usually post different results in random and sequential read and write operations. Because writes can be spread across more memory cells (wear leveling, helped along by garbage collection and the TRIM command), even their overall lifespan grows.
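To make the scaling argument concrete, here is a minimal sketch of the idea in Python. It assumes an idealized model in which every memory chip contributes the same throughput and the controller imposes a fixed ceiling. The function name and all numbers (50 MB/s per chip, a 500 MB/s controller limit) are made up for illustration and do not describe any real drive.

```python
def estimated_throughput(num_chips, per_chip_mb_s, controller_limit_mb_s):
    """Idealized throughput: chips work in parallel, capped by the controller.

    This is a simplified assumption, not a model of any specific SSD.
    """
    return min(num_chips * per_chip_mb_s, controller_limit_mb_s)


# Hypothetical figures chosen only to illustrate the trend.
CHIP_CAPACITY_GB = 32
PER_CHIP_MB_S = 50
CONTROLLER_LIMIT_MB_S = 500

for num_chips in (4, 8, 16):
    capacity_gb = num_chips * CHIP_CAPACITY_GB
    speed = estimated_throughput(num_chips, PER_CHIP_MB_S, CONTROLLER_LIMIT_MB_S)
    print(f"{capacity_gb} GB ({num_chips} chips): ~{speed} MB/s")
```

With these assumed numbers, going from four to eight chips doubles the estimate, while the sixteen-chip drive is held back by the controller limit rather than by the chips themselves.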

Of course, the larger the drives, the greater the difference becomes. In some cases, however, this rule does not hold, because instead of increasing the number of memory chips, the manufacturer swaps them for higher-capacity ones or skips upgrading the controller. The controller may then be unable to service all the chips at once and becomes the so-called "bottleneck", just like the heads in an HDD.

Hundreds of chips on one board?

To anticipate the question: SSDs made up of hundreds of memory chips do not exist. The reason is the so-called "golden mean", a balance between the performance of the individual silicon chips and the production costs themselves. Believe it or not, producing a hundred chips is much more expensive than producing one, even if that one is far more capable. It is just like a portion of french fries at a restaurant. The price difference between a smaller and a larger portion is negligible, because the cost of making it depends on many factors besides size.