NVIDIA Hopper enters full production: H100 officially debuts in October

Over the last few hours, the attention of gaming enthusiasts has undoubtedly been captured by the Ada Lovelace architecture and the announcement of the GeForce RTX 4090 and 4080 cards. The event, however, had much more in store, including the announcement that NVIDIA H100, the artificial intelligence accelerator unveiled in April, is in full production. In October, NVIDIA partners will begin bringing the first wave of products and services based on the Hopper architecture to market.

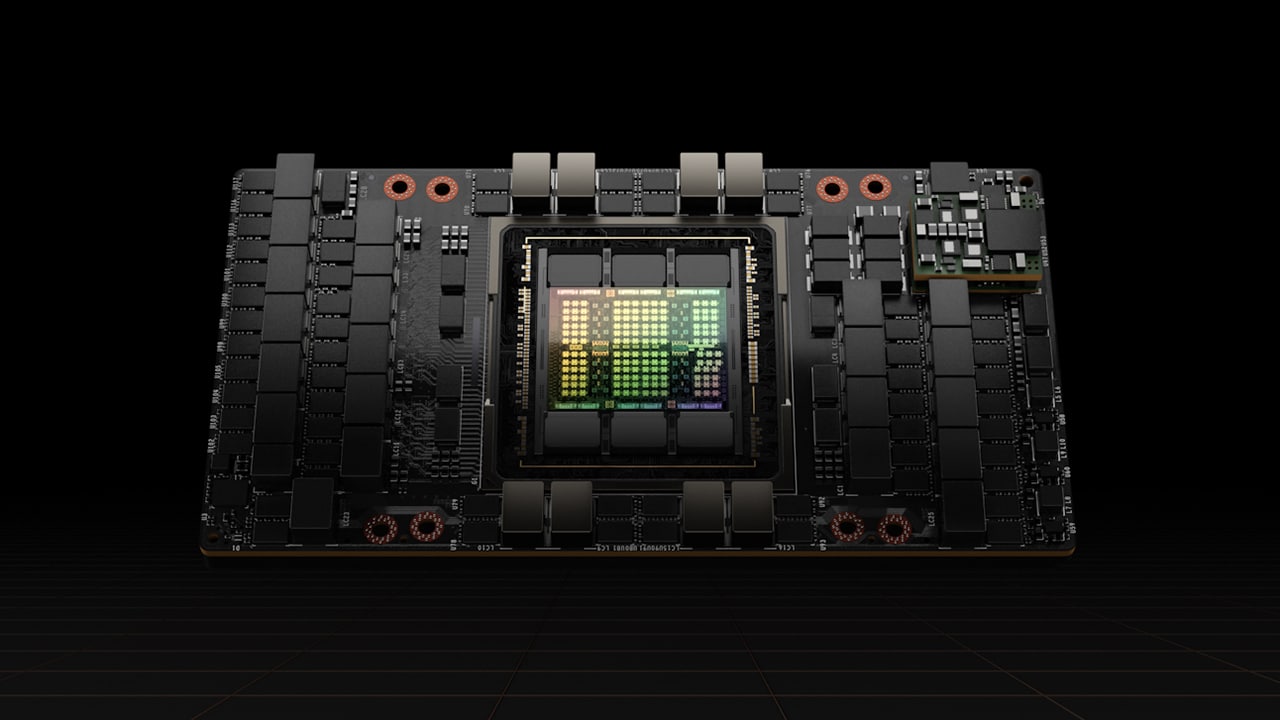

The Hopper chip on board the H100 integrates 80 billion transistors and includes many new features aimed at the world of AI, such as the Transformer Engine, DPX instructions and much more, aspects that you can learn more about in this article. According to NVIDIA, Hopper allows businesses to reduce the cost of deploying artificial intelligence, offering the same performance as the Ampere generation but with major advantages: 3.5 times greater energy efficiency, a 3 times lower TCO (total cost of ownership) and 5 times fewer nodes in the server infrastructure.

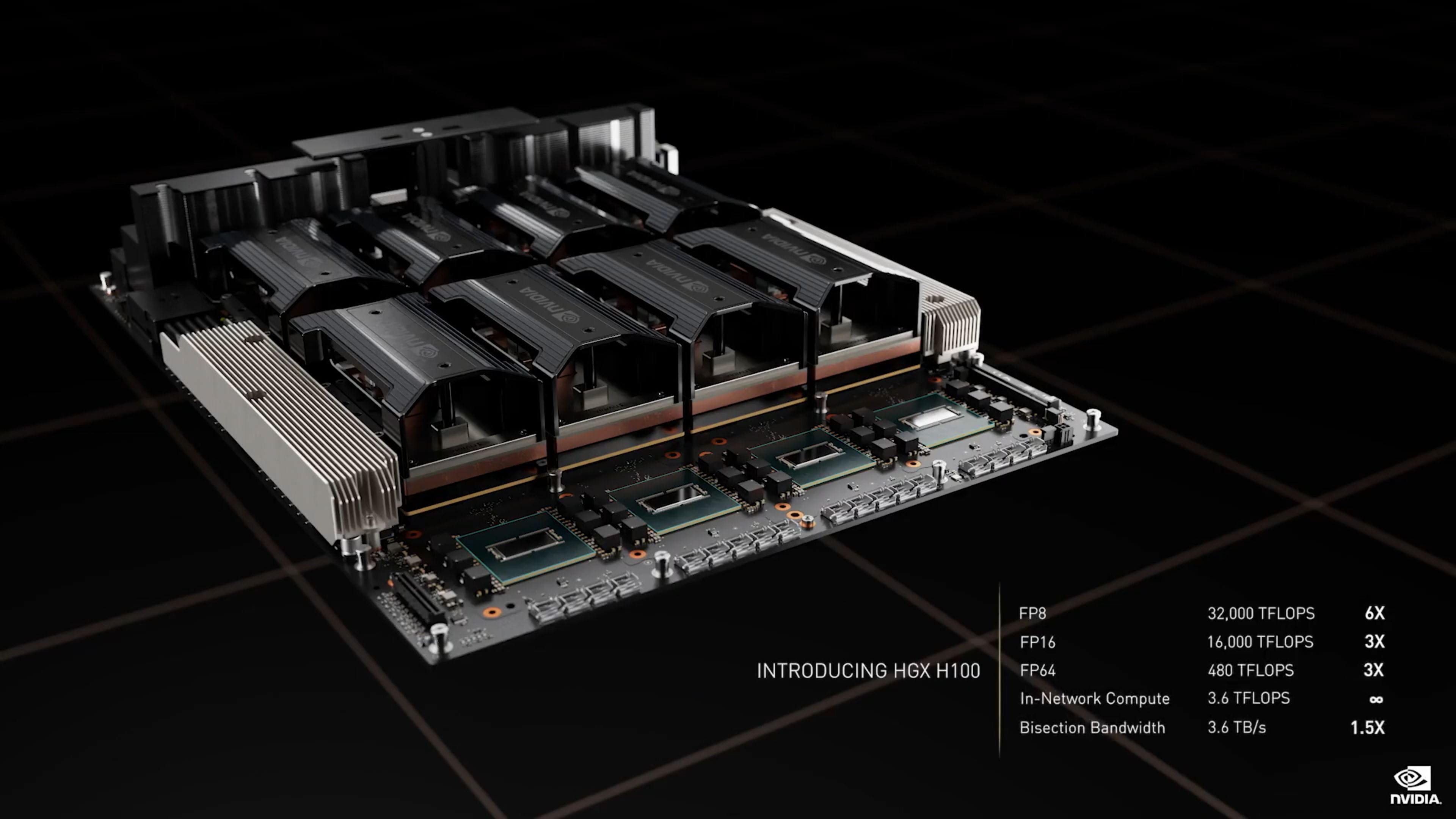

Customers can try the technology right away on Dell PowerEdge servers with H100, available through NVIDIA LaunchPad, and orders have opened for DGX H100 systems, each of which features eight H100 GPUs connected to one another by fourth-generation NVLink to deliver 32 petaflops of performance in AI calculations (FP8). Intel Sapphire Rapids CPUs are also on board. On that front, there is a delay: deliveries were initially planned for Q3, but will actually take place in Q1 2023, perhaps to give Intel time to produce the microprocessors.

Starting in the next few weeks, the major server manufacturers will bring about fifty servers to market, with several dozen more expected in 2023. Partners include Atos, Cisco, Dell Technologies, Fujitsu, GIGABYTE, Hewlett Packard Enterprise, Lenovo and Supermicro.

Research centers such as Barcelona Supercomputing Center, Los Alamos National Lab, Swiss National Supercomputing Centre (CSCS), Texas Advanced Computing Center and University of Tsukuba have announced that they will include the H100 accelerator in their future supercomputers. As for cloud services, NVIDIA announced that Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud will be among the first to offer H100-based instances starting next year.

No word, of course, on the export of H100 artificial intelligence accelerators to China, which the US government has blocked in the absence of explicit authorization.